Most people associate summertime with relaxation and leisure. However, HPC centres like SciNet, and many others around the world, experience it differently: this is actually quite a busy period.

Among the many activities SciNet carries out during the summer “break” are workshops and short courses. These activities are scheduled in the summer to fit between the term-long courses that SciNet offers to graduate students at the University of Toronto.

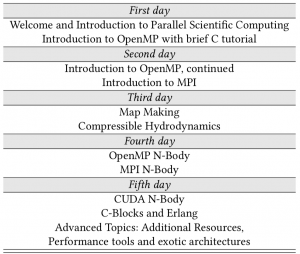

In particular, one of SciNet’s oldest training activities is a one-week intensive school on high-performance and technical computing. This annual summer school is our flagship training event, and is aimed at graduate students, undergraduate students, postdocs, researchers and occasionally even faculty members who are engaged in compute-intensive research. SciNet’s first such summer school was given in 2009, at which time it was called a “Parallel Scientific Computing” workshop. This first version of the school was heavily focused on parallel programming and applications in astrophysics.

These days, SciNet’s summer school is part of the Compute Ontario Summer School on Scientific and High Performance Computing. Held geographically in the west, centre and east of the province of Ontario in Canada, the summer school provides attendees with the opportunity to learn and share knowledge and experience in high performance and technical computing on modern HPC platforms. The central edition is the continuation of the SciNet summer school.

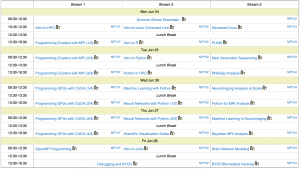

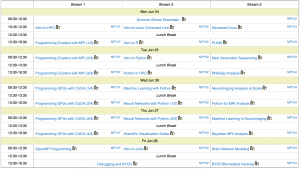

Not only is the school organized in a wider context, its program has expanded as well. In the last three years, the Toronto edition has had three streams with a wide variety of topics, from shell programming to data science, machine learning and neural networks, biomedical computing, and, still, parallel programming.

The type of training offered at the summer school is very practical, with a lot of hands-on exercises and live coding. This practical approach is very typical for most of SciNet’s courses but takes its ultimate form during the summer school instruction.

In addition to the training itself, the school gives participants the opportunity to interact with each other and with the instructors, to exchange ideas, and to discuss problems they are currently trying to solve. In fact, for the past couple of years the program has included focused sessions such as “Bring your own code” and “Bio-Hacking”, where this sort of interaction is not only promoted but is the main theme.

Our summer school has the add-on feature of being absolutely free of charge for participants! That’s something we believe is quite important for several reasons, but mostly because we believe that in this way we can reach more researchers from fields that are relatively new to doing computational research.

This type of event not only benefits the students and participants of the summer school, but also enables collaborations between departments and consortia, as part of the training was delivered in partnership with colleagues from SHARCNET and the Centre for Addiction and Mental Health.

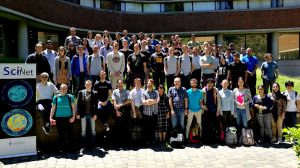

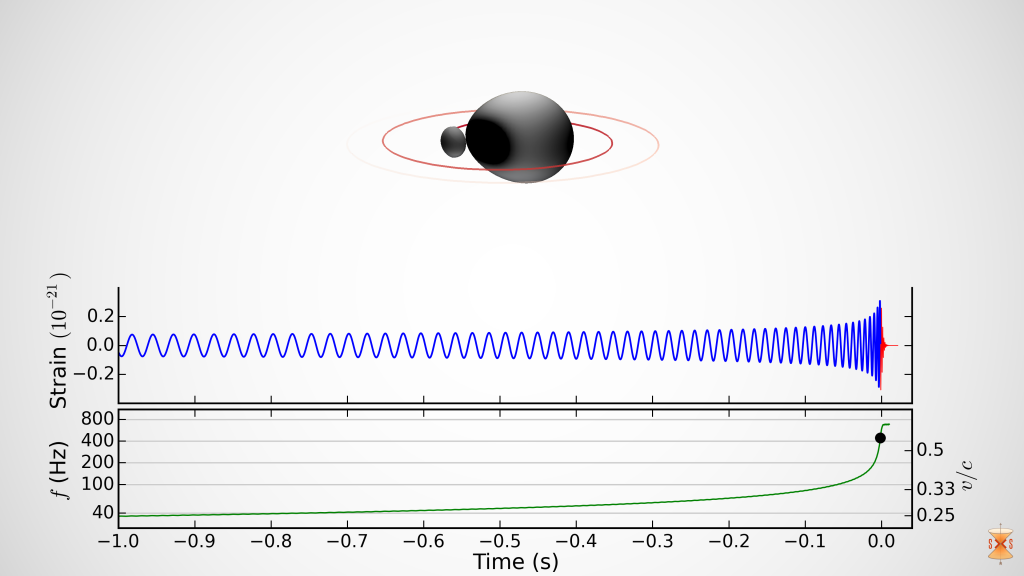

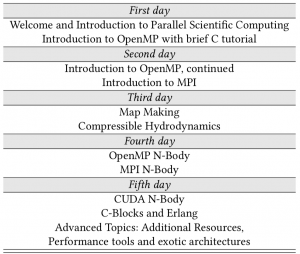

SciNet’s first summer school in 2009 focussed on Parallel Scientific Computing and placed emphasis on scientific applications such as in astrophysics.

SciNet’s latest (and largest) summer school, held in June 2019. This summer school had three parallel streams: the traditional High-Performance Computing stream, one on Data Science, and one on BioInformatics/Medical applications, which was added in 2017. Details of the courses covered in the school can be found on SciNet’s education website: SciNet.courses/438

Logistics and Organizational details of the Summer School

There is no simple recipe to make a successful summer school that attracts and retains motivated participants for five full days, but below are a few necessary ingredients.

Sessions and instructors… Coming up with a program of three streams with sessions on scientific computing, parallel programming and data science is a challenge, but finding excellent instructors for them is an even greater one, especially in summer, when many people are away.

Nonetheless, the summer school has been able to grow from a single-stream offering of 100 lecture hours in 2014 to a three-stream program with nearly 300 lecture hours in 2019. Luckily, we are not limited to SciNet staff for instructors, but also get help from colleagues at SHARCNET and CAMH.

Rooms… Organizing a one-week training session that runs Monday to Friday, from 9:30am to 4:30pm, poses many challenges. To begin with, finding rooms (not just one but three, as there are three parallel sessions), ideally in the same building, each able to host around a hundred people, with enough power outlets, air conditioning, and reasonable comfort, is far from trivial. We manage to do this thanks, again, to the effort of our instructors and staff, who start booking rooms months in advance. Once more, summertime on campus is not the “quiet and relaxing time” people may imagine.

Taking attendance… We issue certificates to participants who attend at least three days. This requires recording every participant’s attendance at every session, every day. In the early summer schools, with one or two parallel sessions at most and a modest number of participants, we used paper sign-in sheets on which students self-reported their attendance. At the end of the week we would collect and count these sheets and award certificates manually.

But with three parallel streams and more than two hundred participants, sorting out attendance manually has become infeasible. To tackle this, we developed a system on our own education website in which participants take a “test”: from 10 randomly generated codes, they select the one announced in the session they are attending.

In this way, the participation of each student is recorded and tied to the specific session associated with the selected code. The same site handles registration, gives students access to the temporary accounts on the computing resources they will use during the week, and hosts the teaching materials.
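The code-selection check described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the implementation used on SciNet’s education website: the names `make_quiz`, `record_attendance` and `SessionQuiz`, the six-character code format, and the in-memory attendance log are all hypothetical.

```python
import secrets
from dataclasses import dataclass
from typing import Dict, List, Set

# Hypothetical code alphabet: look-alike characters (0/O, 1/I) are left out.
CODE_ALPHABET = "ABCDEFGHJKLMNPQRSTUVWXYZ23456789"


def random_code(length: int = 6) -> str:
    """Return one random code, e.g. to be announced in a session."""
    return "".join(secrets.choice(CODE_ALPHABET) for _ in range(length))


@dataclass
class SessionQuiz:
    session_id: str
    correct_code: str   # the code announced in the room
    choices: List[str]  # all codes shown to the participant


def make_quiz(session_id: str, n_choices: int = 10) -> SessionQuiz:
    """Generate n_choices distinct random codes and pick one as the session's code."""
    codes: Set[str] = set()
    while len(codes) < n_choices:
        codes.add(random_code())
    choices = sorted(codes)
    return SessionQuiz(session_id, secrets.choice(choices), choices)


def record_attendance(log: Dict[str, Set[str]], quiz: SessionQuiz,
                      participant: str, selected: str) -> bool:
    """Record attendance only if the participant selected the session's code."""
    if selected != quiz.correct_code:
        return False
    log.setdefault(participant, set()).add(quiz.session_id)
    return True
```

Because each session has its own code, a correct selection ties the participant to that particular session, which is what makes per-session attendance counting possible later on.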

Certificates… Recording attendance is just the beginning of the process of issuing certificates. We then run scripts that identify the participants who qualify for a certificate of participation under the criterion stated above, and generate a PDF document for each of them. Years ago, we used to run across the university campus on the last day to print hard copies, but since last year we have sent participants an electronic version instead. The number of certificates reflects the growth in attendance over the years: in 2014 we awarded summer school certificates to 30 attendees; by 2019, that number had grown to 159.
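As a rough sketch of the eligibility step, the three-day criterion could be applied to attendance records as follows. The record layout (participant, day, session) and the function name `eligible_participants` are assumptions made for illustration, as is the rule that attending any one session counts as attending that day; the actual scripts and the PDF generation are not shown here.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple

MIN_DAYS = 3  # from the text: certificates require attending at least three days

# Hypothetical record layout: (participant, day, session_id)
Record = Tuple[str, str, str]


def eligible_participants(records: Iterable[Record],
                          min_days: int = MIN_DAYS) -> List[str]:
    """Return, sorted, the participants who attended on at least min_days distinct days."""
    days_attended: Dict[str, Set[str]] = defaultdict(set)
    for participant, day, _session in records:
        # Assumption: any recorded session on a day counts as attending that day.
        days_attended[participant].add(day)
    return sorted(p for p, days in days_attended.items() if len(days) >= min_days)


if __name__ == "__main__":
    records = [
        ("alice", "Mon", "hpc-1"), ("alice", "Mon", "hpc-2"),
        ("alice", "Tue", "ds-1"), ("alice", "Wed", "bio-1"),
        ("bob", "Mon", "hpc-1"), ("bob", "Tue", "ds-1"),
    ]
    print(eligible_participants(records))  # ['alice']
```

Counting distinct days rather than sessions means that two sessions on the same day are not mistaken for two days of attendance.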

Financial support… One remarkable thing about the school is that we are able to keep offering this high-quality and relevant training free of cost to the participants. This is not an easy task, as there are several costs associated with the event. The cost of the instructors is absorbed by the partnering organizations (SciNet, SHARCNET and CAMH), the logistical costs of the rooms and AV use are covered by SciNet, and the coffee breaks provided to participants were sponsored by Compute Ontario.

Other centres have decided to charge participants a modest registration fee for their summer schools, which serves two purposes: it offsets some of the cost of the event, and it reduces the number of no-shows. Fortunately, our attendance numbers have been rising steadily every year, while our turn-out rate has remained steady and predictable at 70%, making the no-show effect a non-issue.

More summer activities…

SciNet also participates in the International HPC Summer School, sending a few instructors and 10 students to this competitive one-week program every year.

Last but not least, SciNet finished this year’s summer season by co-organizing and hosting a “virtual”, remotely hosted, week-long PetaScale Computing Institute at the end of August.

Although physically and intellectually exhausted, we finished one of the busiest summer seasons in the history of SciNet’s training and education program, one that lets us keep pushing ourselves and recharge our energies for the beginning of the academic year.

Further details and information about SciNet’s education and teaching endeavours can be found at the following link: